The safety layer inside every AI conversation.

Guardian screens every message in real time, recognizes when someone is in distress, and responds with the right resource for the right person in the right place.

Clinically grounded. Human first. Built inside Ellis, Bonded by Baby, and every partner experience we ship, and shaped by the next organization to join us.

The gap between a filter and a person.

More than a quarter of adults are using AI companions for important health questions, but few AI chatbots have a real process for escalating when someone is at risk.

A generic moderation filter sees two unrelated messages. A person sees a crisis. The gap between those two things is where harm happens — and it's where most AI products in health and wellbeing have no answer.

Guardian is our answer.

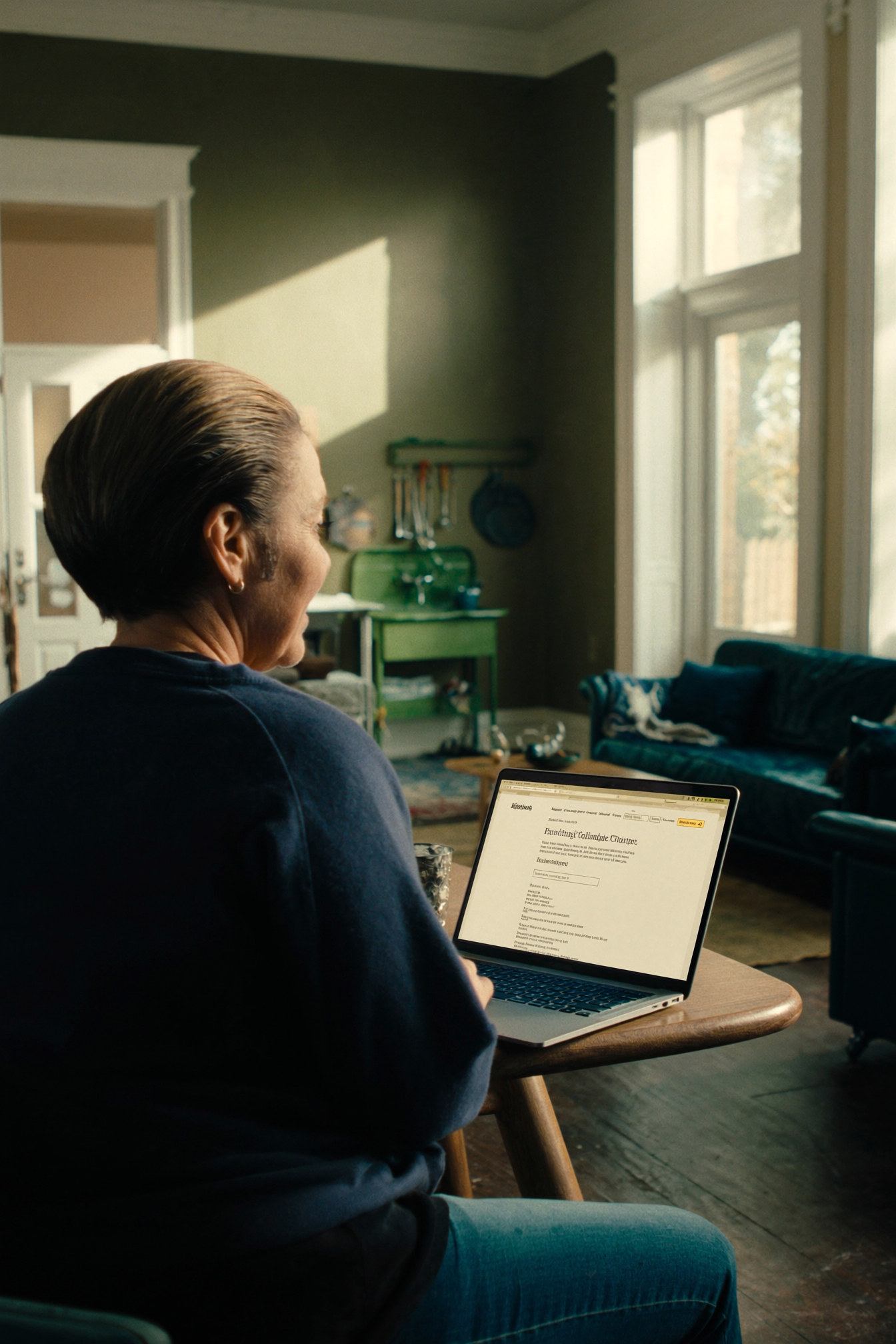

Guardian in action

A two-beat demonstration: first, Guardian surfaces crisis resources in the moment; then, when the signals repeat, it enforces a safety pause.

Five parts of a safety layer, built into the platform.

Each part has a specific job. Together they make every AI conversation we ship safer by default.

Real-time classification of every user message across 25 crisis and sensitivity categories, including suicide, self-harm, abuse, and eating disorders. Each message is read alongside the last three turns of context, so patterns that build across a conversation don't slip through.
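To illustrate why reading recent context matters, here is a minimal Python sketch of windowed classification. It is not Guardian's implementation: the keyword cues, the scores, `FLAG_THRESHOLD`, and the names `score_message` and `WindowedClassifier` are all hypothetical. It assumes "the last three turns" means up to three earlier user messages read together with the new one. A real system would use a trained classifier, not keyword matching.

```python
from collections import deque

CONTEXT_TURNS = 3     # earlier user turns kept alongside the new message (assumption)
FLAG_THRESHOLD = 1.0  # hypothetical combined score at which a category is flagged

# Hypothetical cue weights standing in for a trained classifier.
CUES: dict[str, dict[str, float]] = {
    "self_harm": {"hopeless": 0.6, "no way out": 0.6},
}


def score_message(message: str) -> dict[str, float]:
    """Score one message per category, capped at 1.0 (toy keyword matcher)."""
    text = message.lower()
    return {
        category: min(1.0, sum(w for cue, w in cues.items() if cue in text))
        for category, cues in CUES.items()
    }


class WindowedClassifier:
    """Flags categories whose combined score across recent turns crosses a threshold."""

    def __init__(self, context_turns: int = CONTEXT_TURNS) -> None:
        # A bounded deque drops the oldest turn automatically once it is full.
        self.history: deque[str] = deque(maxlen=context_turns)

    def classify(self, message: str) -> set[str]:
        # Score the new message together with the retained earlier turns.
        window = [*self.history, message]
        totals: dict[str, float] = {}
        for turn in window:
            for category, score in score_message(turn).items():
                totals[category] = totals.get(category, 0.0) + score
        self.history.append(message)
        return {c for c, total in totals.items() if total >= FLAG_THRESHOLD}


classifier = WindowedClassifier()
print(classifier.classify("I feel hopeless lately"))  # set()
print(classifier.classify("There is no way out"))     # {'self_harm'}
```

Neither message reaches the threshold on its own, but the second one does when read with the first. That is the gap between a filter and a person in miniature.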

Region-specific crisis resources, surfaced at the moment they matter. Not a generic helpline buried in a footer, but the right resource for the right person, in their own country and language.

Automated response to repeated risk: escalating severity, temporary timeouts, and account suspension where appropriate. Built for users who return to the same topic and need more than a single intervention.
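An escalation policy like this can be pictured as a ladder that a user climbs with each repeated flag. The sketch below is hypothetical and is not Guardian's decision logic: the `Action` names, the ladder order, the 24-hour lookback, and the 30-minute timeout are illustrative assumptions. Real thresholds would be set with partner clinical teams.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from enum import Enum
from typing import Optional


class Action(Enum):
    SHOW_RESOURCES = "show_resources"
    TIMEOUT = "timeout"
    SUSPEND = "suspend"


# Hypothetical policy: the action for the 1st, 2nd, 3rd, and 4th-or-later
# flagged message inside the lookback window.
LADDER = [Action.SHOW_RESOURCES, Action.SHOW_RESOURCES, Action.TIMEOUT, Action.SUSPEND]
LOOKBACK = timedelta(hours=24)
TIMEOUT_LENGTH = timedelta(minutes=30)


@dataclass
class UserRiskState:
    flags: list[datetime] = field(default_factory=list)
    timeout_until: Optional[datetime] = None

    def record_flag(self, now: datetime) -> Action:
        """Record a flagged message and return the escalated action to take."""
        # Forget flags that fall outside the lookback window.
        self.flags = [t for t in self.flags if now - t <= LOOKBACK]
        self.flags.append(now)
        # Repeat flags climb the ladder; anything past the end stays at the top.
        action = LADDER[min(len(self.flags), len(LADDER)) - 1]
        if action is Action.TIMEOUT:
            self.timeout_until = now + TIMEOUT_LENGTH
        return action

    def is_paused(self, now: datetime) -> bool:
        """True while a temporary timeout (a safety pause) is in force."""
        return self.timeout_until is not None and now < self.timeout_until


state = UserRiskState()
start = datetime(2024, 1, 1, 12, 0)
for minutes in (0, 5, 10, 45):
    print(state.record_flag(start + timedelta(minutes=minutes)).value)
# prints show_resources, show_resources, timeout, suspend (one per line)
```

Expiring old flags keeps a single difficult day from counting against someone indefinitely, while still catching a topic that keeps returning within a short span.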

Cross-conversation pattern monitoring for partner teams. Aggregated insight into where users are struggling, which topics are driving crises, and where content or programme gaps need attention.

Reporting for partner boards, regulators, and clinical reviewers. Every Guardian decision is logged with its rationale, outcome, and timestamp, with data handling designed to meet HIPAA standards and support emerging AI safety regulations.
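A decision log of this kind can be as simple as one JSON line per decision. The sketch below is hypothetical and does not describe Guardian's schema: only the rationale, outcome, and timestamp fields come from this page. `category`, `conversation_ref`, `DecisionRecord`, and `log_decision` are assumptions. Storing a pseudonymous reference instead of message text is one way to illustrate data minimization.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class DecisionRecord:
    timestamp: str         # ISO 8601, UTC
    category: str          # hypothetical: category that triggered the decision
    rationale: str
    outcome: str
    conversation_ref: str  # hypothetical: pseudonymous ID, never raw message text


def log_decision(category: str, rationale: str, outcome: str,
                 conversation_ref: str, now: Optional[datetime] = None) -> str:
    """Serialize one decision as a JSON line for an append-only audit log."""
    now = now or datetime.now(timezone.utc)
    record = DecisionRecord(
        timestamp=now.astimezone(timezone.utc).isoformat(),
        category=category,
        rationale=rationale,
        outcome=outcome,
        conversation_ref=conversation_ref,
    )
    # sort_keys gives a stable field order, which keeps log lines easy to diff.
    return json.dumps(asdict(record), sort_keys=True)


print(log_decision(
    category="self_harm",
    rationale="distress cues across recent turns",
    outcome="show_resources",
    conversation_ref="conv-7f3a",
    now=datetime(2024, 1, 1, tzinfo=timezone.utc),
))
```

A frozen record that cannot be edited after creation suits an audit trail: reviewers can trust that what they read is what was decided at the time.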

A simple flow, running on every message.

End to end in a few hundred milliseconds. Invisible when nothing is wrong. Decisive when something is. Every decision logged for the partner team.

Guardian is built into every Whitelabel partnership.

No separate contract. No separate line item. No integration work for our partners. Every AI experience we build with a partner runs on Guardian: Ellis for Livestrong, Bonded by Baby with Social Creatures and Mount Sinai, and our wider partner deployments. It is the safety layer, and it is on by default.

Built with the people who understand this work best.

Guardian is developed in collaboration with partner clinical teams, not in isolation from them. Our taxonomy draws on established clinical frameworks. Our resource database is curated with partner clinicians. Our decision logic is reviewed by the organizations whose users depend on it.

We are SOC 2 compliant and are actively working toward HIPAA compliance. Data minimization, access controls, and human oversight are designed in from the start, not added later.

What we say, and what we don't.

Guardian is:

- A safety layer that helps identify crisis signals

- A routing layer that surfaces appropriate resources

- An evidence layer that supports partner teams with documentation of reasonable care

- A default, not an add-on

Guardian is not:

- A clinician

- A diagnostic tool

- A replacement for human judgement, clinical pathways, or emergency services

- A guarantee. When someone is in danger, the right answer is still a human. Guardian's job is to get them there faster.

The regulatory ground is moving.

Regulators across the US, UK, and EU are moving quickly to require evidence-based safety measures in conversational AI. For nonprofits and health organizations, safety is no longer optional and cannot be retrofitted. Guardian is how Whitelabel partners meet that bar from day one.

Guardian grows with every partner we work with.

We don't build safety in a boardroom. Every new category, every new region, every new resource in Guardian has come from a partner organization bringing us a specific user need we hadn't yet seen. If your organization supports people through something hard, we'd like your voice in the next version.

Start with a conversation

A pro-bono working session to understand who you serve, what your users are telling you, and where Guardian could fit into your work.

Co-design the safety layer

We adapt Guardian's categories, resources, and escalation rules to your clinical context, your audience, and the regions you operate in.

Ship together, learn together

Deploy as part of a Whitelabel experience. What we learn in your deployment strengthens Guardian for every partner that follows.

Does your organization work in an area Guardian doesn't yet cover?

Guardian's categories, resources, and clinical framing are shaped by the partners using it. If your users are living through something we haven't built for yet — tell us. That's how this gets better.

Help shape how AI keeps people safe.

Whether you're a nonprofit supporting people through grief, a hospital system exploring AI for maternal care, or a charity working with young people — if your mission involves conversations that matter, we'd like to build with you.

Partners help shape Guardian's categories, resources, and clinical framing for the people they serve. You bring the context. We bring the platform. Guardian grows for everyone.